AI tools are often treated like chat interfaces: ask a question, receive an answer, repeat. That model is useful, but it leaves a tremendous amount of capability untapped.

A more powerful way to think about AI is as a callable capability inside a broader system of tools, not as a conversational partner. When AI can be invoked from the command line, it becomes something entirely different: a programmable component in a workflow.

In other words, the AI stops being the interface and starts becoming an instrument.

The Shift: From Chat to Workflow

Many modern AI systems, including tools like GitHub Copilot CLI, can be invoked from the command line. Once you can run an AI prompt as a command, several possibilities emerge:

- AI prompts can be scripted.

- AI tasks can run in parallel.

- AI outputs can be structured and assembled into artifacts.

- AI can become a worker inside a larger pipeline.
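
To make "a prompt as a command" concrete, here is a minimal Python sketch. It assumes the AI tool reads a prompt on standard input and writes its answer to standard output, which may not match any particular product. The command used here is a hypothetical stand-in (a Python one-liner that upper-cases its input), not the interface of GitHub Copilot CLI or any other real tool.

```python
import subprocess
import sys

# Hypothetical stand-in for a real AI CLI: a Python one-liner that echoes its
# stdin back in upper case. Replace it with the actual command and flags of
# the tool you use; how a real tool accepts prompts is an assumption here.
FAKE_AI_CMD = [
    sys.executable,
    "-c",
    "import sys; print(sys.stdin.read().strip().upper())",
]


def run_prompt(prompt: str, cmd: list[str] = FAKE_AI_CMD, timeout: float = 60.0) -> str:
    """Send one prompt to a command-line tool on stdin and return its stdout."""
    result = subprocess.run(
        cmd,
        input=prompt,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout.strip()


if __name__ == "__main__":
    print(run_prompt("summarize the build log"))
```

Once a prompt is an ordinary function call like this, it can be looped over, retried, run in parallel, or piped into other steps like any other command.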

This opens the door to something closer to meta-cognition: thinking not just about the answer, but about how the system should think.

Instead of asking:

“What is the answer?”

You start asking:

“What is the best process to generate the answer?”

That shift changes everything.

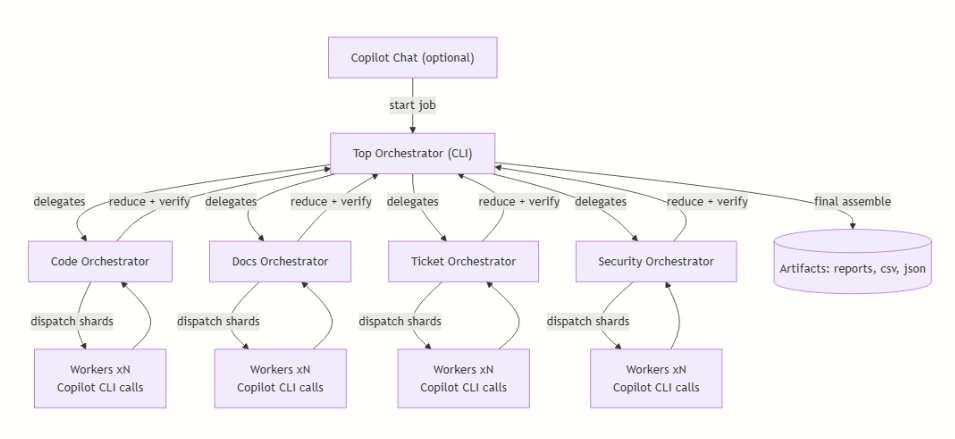

An Orchestrated AI Workflow

One practical architecture for this approach is shown below.

Solving the Context Window Problem

One of the most widely discussed limitations of AI systems is the context window. Large codebases, datasets, or document collections often exceed what a single prompt can reasonably process.

Command-line orchestration offers a simple but powerful workaround: parallel decomposition.

Instead of sending a massive corpus to a single prompt, the orchestrator can:

- Split the input into meaningful shards.

- Dispatch those shards to multiple AI worker processes.

- Merge the results through a structured reduction step.

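This approach resembles distributed computing models like MapReduce, but applied to reasoning tasks. Each worker processes a slice of the problem independently, allowing the system to effectively reason about corpora far larger than the model’s nominal context window. The trade-off is that no single worker sees the whole corpus, so relationships that span shards have to be recovered when the results are merged.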
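
The split, dispatch, and merge steps can be sketched as follows. This is an illustration under stated assumptions, not a reference implementation: `fake_worker` and `fake_reducer` are hypothetical placeholders for calls to an AI tool, and the fixed-size sharding is the simplest possible strategy. A real orchestrator would likely split along meaningful boundaries such as modules or documents.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def split_into_shards(items: list[str], shard_size: int) -> list[list[str]]:
    """Split the input into consecutive shards of at most shard_size items."""
    if shard_size < 1:
        raise ValueError("shard_size must be at least 1")
    return [items[i:i + shard_size] for i in range(0, len(items), shard_size)]


def map_reduce(
    items: list[str],
    worker: Callable[[list[str]], str],
    reducer: Callable[[list[str]], str],
    shard_size: int = 2,
    max_workers: int = 4,
) -> str:
    """Run worker on each shard in parallel, then merge the partial results."""
    shards = split_into_shards(items, shard_size)
    # Threads are sufficient when each worker mostly waits on an external process.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(worker, shards))  # map() preserves shard order
    return reducer(partials)


# Hypothetical worker: a real one would send the shard to an AI tool.
def fake_worker(shard: list[str]) -> str:
    return f"{len(shard)} files: " + ", ".join(shard)


# Hypothetical reducer: a real one would deduplicate and reconcile findings.
def fake_reducer(partials: list[str]) -> str:
    return " | ".join(partials)


if __name__ == "__main__":
    print(map_reduce(["a.py", "b.py", "c.py"], fake_worker, fake_reducer))
```

Keeping the worker and reducer as plain function arguments means the same skeleton can be reused with different prompts and merge rules.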

In practice, this means an AI workflow can analyze:

- thousands of source files

- large documentation spaces

- extensive ticket histories

- operational log datasets

All by distributing the cognitive workload across many smaller prompts.

Domain-Specific AI Workers

Another advantage of this model is specialization.

Rather than relying on a single general-purpose prompt, workflows can create domain-oriented orchestrators that coordinate their own worker pools.

For example:

Code Orchestrator

- analyzes repositories

- generates architecture summaries

- detects coupling and complexity hotspots

- proposes refactoring opportunities

Documentation Orchestrator

- extracts requirements from documentation systems

- builds domain glossaries

- generates onboarding documentation

Ticket Orchestrator

- summarizes issue backlogs

- identifies recurring operational themes

- clusters related problems across time

Security Orchestrator

- scans code patterns

- surfaces potential vulnerabilities

- cross-references dependency alerts

Each orchestrator applies different prompts, chunking strategies, and verification rules, but they all rely on the same underlying capability: an AI tool that can be invoked programmatically from the command line.
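
One way to express "different prompts, chunking strategies, and verification rules" is as plain configuration. The sketch below is an assumption about how such a specification might look. The names `OrchestratorSpec`, `CODE`, and `TICKETS`, the prompt wording, and the trivial verifiers are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class OrchestratorSpec:
    """Hypothetical bundle of the three things that vary per domain."""
    name: str
    prompt_template: str                  # must contain a {chunk} placeholder
    chunker: Callable[[str], list[str]]   # how the corpus is split
    verifier: Callable[[str], bool]       # cheap check applied to each output


def chunk_by_blank_line(text: str) -> list[str]:
    """Split on blank lines, e.g. one chunk per function or paragraph."""
    return [part.strip() for part in text.split("\n\n") if part.strip()]


def chunk_by_line(text: str) -> list[str]:
    """Split on single lines, e.g. one chunk per ticket title."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def build_prompts(spec: OrchestratorSpec, corpus: str) -> list[str]:
    """Turn a corpus into the list of prompts this orchestrator would dispatch."""
    return [spec.prompt_template.format(chunk=c) for c in spec.chunker(corpus)]


CODE = OrchestratorSpec(
    name="code",
    prompt_template="Summarize the architecture of:\n{chunk}",
    chunker=chunk_by_blank_line,
    verifier=lambda output: len(output.strip()) > 0,
)

TICKETS = OrchestratorSpec(
    name="tickets",
    prompt_template="Classify this ticket: {chunk}",
    chunker=chunk_by_line,
    verifier=lambda output: len(output.strip()) > 0,
)

if __name__ == "__main__":
    for prompt in build_prompts(TICKETS, "login fails\n\nslow page\n"):
        print(prompt)
```

Because each specification is data rather than code, adding a new domain means adding one more entry instead of writing a new pipeline.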

The intelligence of the system does not come from a single model doing everything. It comes from how the reasoning tasks are organized and coordinated.

Reduce, Verify, and Produce Artifacts

Parallel AI workers inevitably produce overlapping or partially inconsistent outputs. Simply concatenating their responses would produce noise rather than insight.

A well-designed orchestrator therefore performs a reduction step that:

- deduplicates overlapping findings

- resolves contradictions between workers

- verifies claims against deterministic checks

- assembles a coherent result from many smaller analyses
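
A reduction step along these lines might look like the sketch below. The `Finding` shape, the severity scale, and the two policies it applies are assumptions chosen for illustration: a finding is kept only if the symbol it cites literally appears in the cited file (a deterministic check), and when workers disagree on severity the higher rating wins.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical shape of one worker finding."""
    file: str
    symbol: str
    severity: str  # "low", "medium", or "high"


SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2}


def reduce_findings(
    worker_outputs: list[list[Finding]],
    sources: dict[str, str],
) -> list[Finding]:
    """Deduplicate, verify, and reconcile findings from many workers."""
    merged: dict[tuple[str, str], Finding] = {}
    for findings in worker_outputs:
        for finding in findings:
            # Deterministic check: drop findings that cite an unknown file or
            # a symbol that does not occur in that file's text.
            if finding.symbol not in sources.get(finding.file, ""):
                continue
            key = (finding.file, finding.symbol)
            current = merged.get(key)
            # Same key seen twice is a duplicate; on a severity conflict,
            # keep the higher rating (an assumed policy).
            if current is None or SEVERITY_RANK[finding.severity] > SEVERITY_RANK[current.severity]:
                merged[key] = finding
    return sorted(merged.values(), key=lambda f: (f.file, f.symbol))


if __name__ == "__main__":
    sources = {"auth.py": "def check_token(token): ..."}
    outputs = [
        [Finding("auth.py", "check_token", "low")],
        [Finding("auth.py", "check_token", "high"), Finding("auth.py", "missing_fn", "high")],
    ]
    print(reduce_findings(outputs, sources))
```

Other conflict policies are equally plausible, such as flagging disagreements for human review instead of picking a winner.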

At this stage the system transitions from raw reasoning to usable outputs. The goal is not just to answer a question but to produce artifacts that engineers can interact with directly.

Typical outputs might include:

- architecture reports

- CSV exports of generated test cases

- JSON summaries of system dependencies

- markdown documentation

- traceability matrices linking requirements to code

These artifacts can be stored, versioned, reviewed, and integrated into existing engineering workflows. In many cases they become the real product of the AI pipeline.
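
As a rough sketch of the artifact step, the functions below render reduced results as JSON, CSV, and markdown and write them to a directory. The file names, the `id` and `title` columns, and the input shapes are hypothetical, picked only to keep the example small.

```python
import csv
import io
import json
from pathlib import Path


def to_json(dependencies: dict[str, list[str]]) -> str:
    """Render a dependency summary as stable, diff-friendly JSON."""
    return json.dumps(dependencies, indent=2, sort_keys=True)


def to_csv(test_cases: list[dict[str, str]], fields: list[str]) -> str:
    """Render generated test cases as CSV with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fields, lineterminator="\n")
    writer.writeheader()
    writer.writerows(test_cases)
    return buffer.getvalue()


def to_markdown(title: str, bullets: list[str]) -> str:
    """Render a short report as a markdown heading plus a bullet list."""
    lines = [f"# {title}", ""] + [f"- {bullet}" for bullet in bullets]
    return "\n".join(lines) + "\n"


def write_artifacts(
    out_dir: Path,
    dependencies: dict[str, list[str]],
    test_cases: list[dict[str, str]],
    findings: list[str],
) -> list[Path]:
    """Write all artifacts to out_dir and return the paths that were created."""
    out_dir.mkdir(parents=True, exist_ok=True)
    files = {
        "dependencies.json": to_json(dependencies),
        "test_cases.csv": to_csv(test_cases, ["id", "title"]),
        "report.md": to_markdown("Architecture report", findings),
    }
    paths = []
    for name, content in files.items():
        path = out_dir / name
        path.write_text(content, encoding="utf-8")
        paths.append(path)
    return paths
```

Sorting keys and fixing the column order keeps the output deterministic, so successive runs produce clean diffs under version control.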

Meta-Cognition in Engineering Workflows

What makes this approach powerful is that it pushes engineers toward meta-cognitive thinking.

Instead of treating AI as a single thinking partner, we begin designing systems that think.

We decide:

- how problems should be decomposed

- how reasoning tasks should be distributed

- how outputs should be verified

- how knowledge should be assembled

The AI becomes one component inside a larger cognitive architecture.

In that sense, the most valuable skill is no longer prompt writing—it is workflow design.

The Art of the Possible

Once AI becomes callable infrastructure, the design space opens dramatically.

Workflows like the one described above can analyze entire codebases, synthesize knowledge from thousands of issue tickets, generate test cases from documentation, and produce architectural maps of complex systems.

The striking part is that none of these capabilities depend on new models or larger context windows. The underlying AI is often exactly the same tool people use interactively every day.

What changes is the structure around it.

When AI becomes a programmable component rather than a conversational endpoint, its limitations become manageable engineering constraints instead of hard boundaries. Context limits can be worked around through parallel decomposition. Verification steps can be inserted where accuracy matters. Specialized orchestrators can apply different reasoning strategies to different domains.

In other words, the breakthrough is not in the intelligence of the model. It is in the intelligence of the workflow.

And once you start thinking at that level—once you begin designing workflows that orchestrate reasoning rather than simply requesting it—the possibilities expand rapidly.

That is where the real opportunity lies.

Not in asking better questions.

But in designing better systems of questions.
